
The flag I am referring to is set to true by default. If ACL has been built with this flag, the developer can find all the graph examples ready to be benchmarked under build/tests (e.g. benchmark_graph_mobilenet, benchmark_graph_alexnet).

OpenCL - F32 (Wall clock time):

    ./benchmark_graph_alexnet --example_args=--target=CL,--enable-tuner,--type=f32 --iterations=100

OpenCL - F32 (OpenCL kernel profiling):

    ./benchmark_graph_alexnet --example_args=--target=CL,--enable-tuner,--type=f32 --iterations=100 --instruments=opencl_timer_ms
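The two benchmark invocations differ only in the instruments option, which a small script can make explicit. The sketch below is a plain Python helper, not part of ACL; `build_benchmark_command` and its parameters are hypothetical names, and it assumes the options take the usual double-dash form.

```python
def build_benchmark_command(example, target="CL", dtype="f32", iterations=100,
                            enable_tuner=True, instruments=None):
    """Assemble an ACL graph-benchmark command line as a list of arguments.

    Hypothetical helper: only the option names quoted in the text are used.
    """
    # Arguments forwarded to the graph example itself, joined with commas.
    example_args = [f"--target={target}"]
    if enable_tuner:
        example_args.append("--enable-tuner")
    example_args.append(f"--type={dtype}")

    command = [
        f"./{example}",
        "--example_args=" + ",".join(example_args),
        f"--iterations={iterations}",
    ]
    # Without an instrument the run reports wall clock time only.
    if instruments:
        command.append(f"--instruments={instruments}")
    return command


wall_clock = build_benchmark_command("benchmark_graph_alexnet")
kernel_profiling = build_benchmark_command(
    "benchmark_graph_alexnet", instruments="opencl_timer_ms"
)

print(" ".join(wall_clock))
print(" ".join(kernel_profiling))
```

Returning a list rather than a single string means the result can be passed directly to `subprocess.run` from the build/tests directory without shell quoting concerns.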

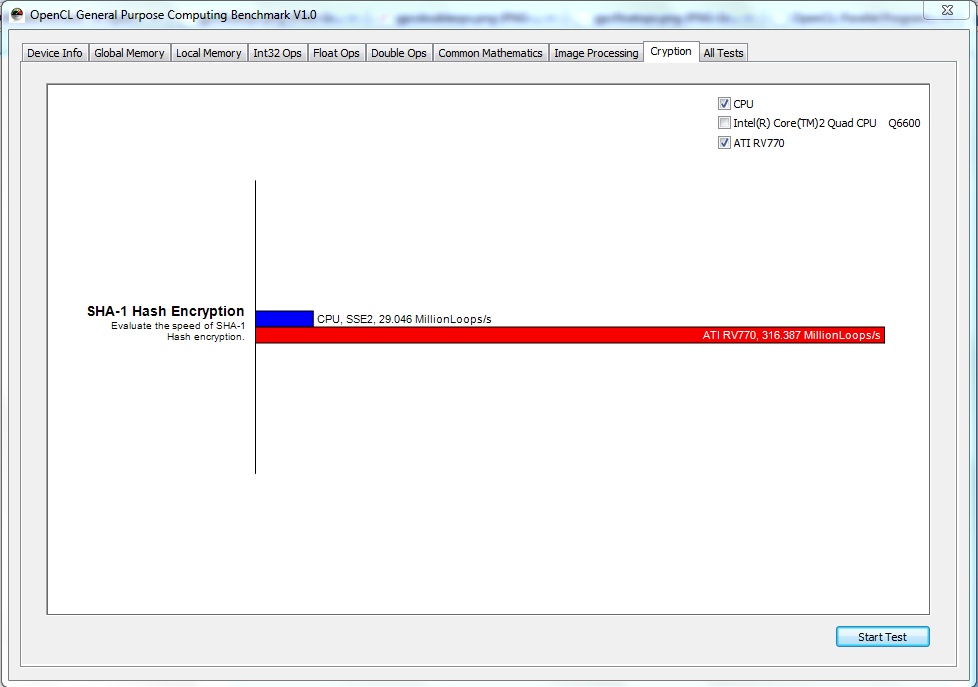

Sorry for the slow response. For ACL you'll get the best response on their GitHub issues page, but I've consulted an ACL expert, and yes, it is possible to profile OpenCL kernel performance through ACL. In ACL there is a flag for building the graph examples (the ones in the examples/ folder) for benchmarking.

The `with tf.device(...)` line builds a new context manager, instructing TensorFlow to use the GPU to accomplish those tasks. A first constant can be created with `he1 = tf.constant('Hi, TensorFlow world!')`. The timing example then looks like this:

    from datetime import datetime
    import tensorflow as tf  # TensorFlow 1.x API (tf.Session, tf.random_uniform)

    # `shape` (matrix dimensions) and `d_name` (device string) are set
    # earlier in the script; their values are not given here.
    with tf.device(d_name):
        ran_matrix = tf.random_uniform(shape=shape, minval=0, maxval=1)
        d_operation = tf.matmul(ran_matrix, tf.transpose(ran_matrix))

    startTime = datetime.now()
    with tf.Session(config=tf.ConfigProto(log_device_placement=True)) as session:
        session.run(d_operation)  # assumed session body: evaluate the product

    print("Shape:", shape, "Device:", d_name)
    print("Time done:", datetime.now() - startTime)

OpenCL allows a wide range of accelerators to be used, including multi-core CPUs, GPUs, DSPs, FPGAs, and specialized hardware such as inferencing engines. An OpenCL system is divided into host and device components, with host software written in a general-purpose programming language such as C or C++ and compiled for the host CPU with a normal compiler.

Translating TensorFlow to OpenCL would require writing the kernels in OpenCL C and maintaining distinct codebases, both of which would be difficult to maintain. With SYCL, all of it is single-source C++, so the SYCL back-end can be integrated into TensorFlow in a non-intrusive way.

Let's see the sample code for registration (the CPU entry is cut off after `double,`):

    REG5(UnaryOp, CPU, "Sqrt", functor::sqrt, float, Eigen::half, double,
    REG3(UnaryOp, GPU, "Sqrt", functor::sqrt, float, Eigen::half, double)
    REG2(UnaryOp, SYCL, "Sqrt", functor::sqrt, float, double)

As a standard, OpenCL contains all of the necessary parts, namely run-time code generation and sufficient support for heterogeneous computing. In this article, we have seen how TensorFlow works with OpenCL, covering the introduction, an overview, and examples with code.
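The registration lines for `Sqrt` list one entry per device (CPU, GPU, SYCL), each with the data types that device supports. As a rough analogy only, and not TensorFlow's actual mechanism, the same idea can be sketched in Python as a lookup table keyed by operation and device. `register_unary`, `lookup`, and `sqrt_kernel` are hypothetical names, and the CPU entry lists only the types that are visible in the text.

```python
import math
from collections import defaultdict

# (op name, device) -> {dtype name: kernel function}
_KERNELS = defaultdict(dict)


def register_unary(op_name, device, kernel, *dtypes):
    """Register one kernel for every listed dtype on a device (hypothetical helper)."""
    for dtype in dtypes:
        _KERNELS[(op_name, device)][dtype] = kernel


def lookup(op_name, device, dtype):
    """Return the kernel registered for this op/device/dtype, or raise KeyError."""
    try:
        return _KERNELS[(op_name, device)][dtype]
    except KeyError:
        raise KeyError(f"no {op_name} kernel for {dtype} on {device}") from None


def sqrt_kernel(values):
    """Element-wise square root over a list of numbers."""
    return [math.sqrt(v) for v in values]


# Mirrors the three registration lines; the CPU type list is truncated in the text.
register_unary("Sqrt", "CPU", sqrt_kernel, "float", "half", "double")
register_unary("Sqrt", "GPU", sqrt_kernel, "float", "half", "double")
register_unary("Sqrt", "SYCL", sqrt_kernel, "float", "double")

print(lookup("Sqrt", "SYCL", "float")([4.0, 9.0]))
```

In this sketch, asking for `half` on the SYCL device raises `KeyError`, which reflects the shorter type list in the SYCL registration line.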
TensorFlow now includes OpenCL support, implemented through SYCL, thanks to Codeplay. TensorFlow is based on the Eigen linear algebra C++ library. The required packages can be installed with:

    sudo apt install git cmake gcc build-essential libpython3-all-dev ocl-icd-opencl-dev opencl-headers openjdk-8-jdk python3 python3-dev python3-pip zlib1g-dev

It's a good idea to run the tests to ensure TensorFlow was built successfully. With the following command, you can run a large set of roughly 1500 tests:

    bazel test --test_lang_filters=cc,py --test_timeout 1500 --verbose_failures --jobs=1 --config=sycl --config=opt -- //tensorflow/.

We're working on adding support for OpenCL™ devices to the TensorFlow framework using SYCL™ to give developers access to a wider range of processors. SYCL is a royalty-free, cross-platform C++ abstraction layer, while OpenCL (Open Computing Language) is a framework for building applications that execute across heterogeneous platforms. OpenCL is a parallel computing standard for task- and data-based parallelism.

OpenCL provides a 2x inferencing speedup over OpenGL ES acceleration. TensorFlow Lite falls back to OpenGL ES if OpenCL isn't available, although most mobile GPU vendors supply OpenCL drivers, even if they aren't exposed directly to Android app developers. Higher-level frameworks and compilers are increasingly using OpenCL as an acceleration target.

Because OpenCL allows workloads to be shared by CPU and GPU while running the same programs, programmers can take advantage of both by dividing work across the devices. Because the relative rates of operations differ among the devices, this creates a dilemma in deciding how to partition the work. Machine learning has been proposed as a solution to this issue.

Blender's most recent versions support OpenCL rendering. Using the container that has been provided to the Sylabs library, you can run Blender as a graphical program that will use a local Radeon GPU for OpenCL compute:

    $ singularity exec --rocm --bind /etc/OpenCL library://sylabs/demo/blend blender

To add OpenCL support to TensorFlow, we need to use ComputeCpp to create an OpenCL version of TensorFlow.
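The partitioning dilemma mentioned for CPU and GPU workloads can be made concrete with a small sketch. It uses a simple static split proportional to measured throughput, not the machine-learning approach the text refers to. `partition_work` and the throughput figures are hypothetical and only illustrate the arithmetic.

```python
def partition_work(total_items, throughputs):
    """Split total_items across devices in proportion to their throughput.

    throughputs maps a device name to a measured rate (items per second).
    """
    total_rate = sum(throughputs.values())
    if total_rate <= 0:
        raise ValueError("at least one device needs a positive throughput")

    # Ideal (fractional) share for each device.
    exact = {dev: total_items * rate / total_rate
             for dev, rate in throughputs.items()}
    # Round down first, then hand out the remaining items one by one
    # to the devices with the largest fractional remainder.
    shares = {dev: int(share) for dev, share in exact.items()}
    leftover = total_items - sum(shares.values())
    by_remainder = sorted(exact, key=lambda dev: exact[dev] - shares[dev],
                          reverse=True)
    for dev in by_remainder[:leftover]:
        shares[dev] += 1
    return shares


# Assumed figures: the GPU processes items three times as fast as the CPU.
split = partition_work(1000, {"cpu": 1.0, "gpu": 3.0})
print(split)
```

A static split like this only holds while the relative rates stay stable; when they vary with the workload, the ratio has to be measured again or predicted, which is where learned models come in.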

TensorFlow is a machine learning algorithm execution framework based on artificial intelligence concepts.
